Understanding GenAI Terminology

One of the biggest obstacles to getting started with GenAI is not understanding the basic terminology.

Let’s cover the most important terms to know.

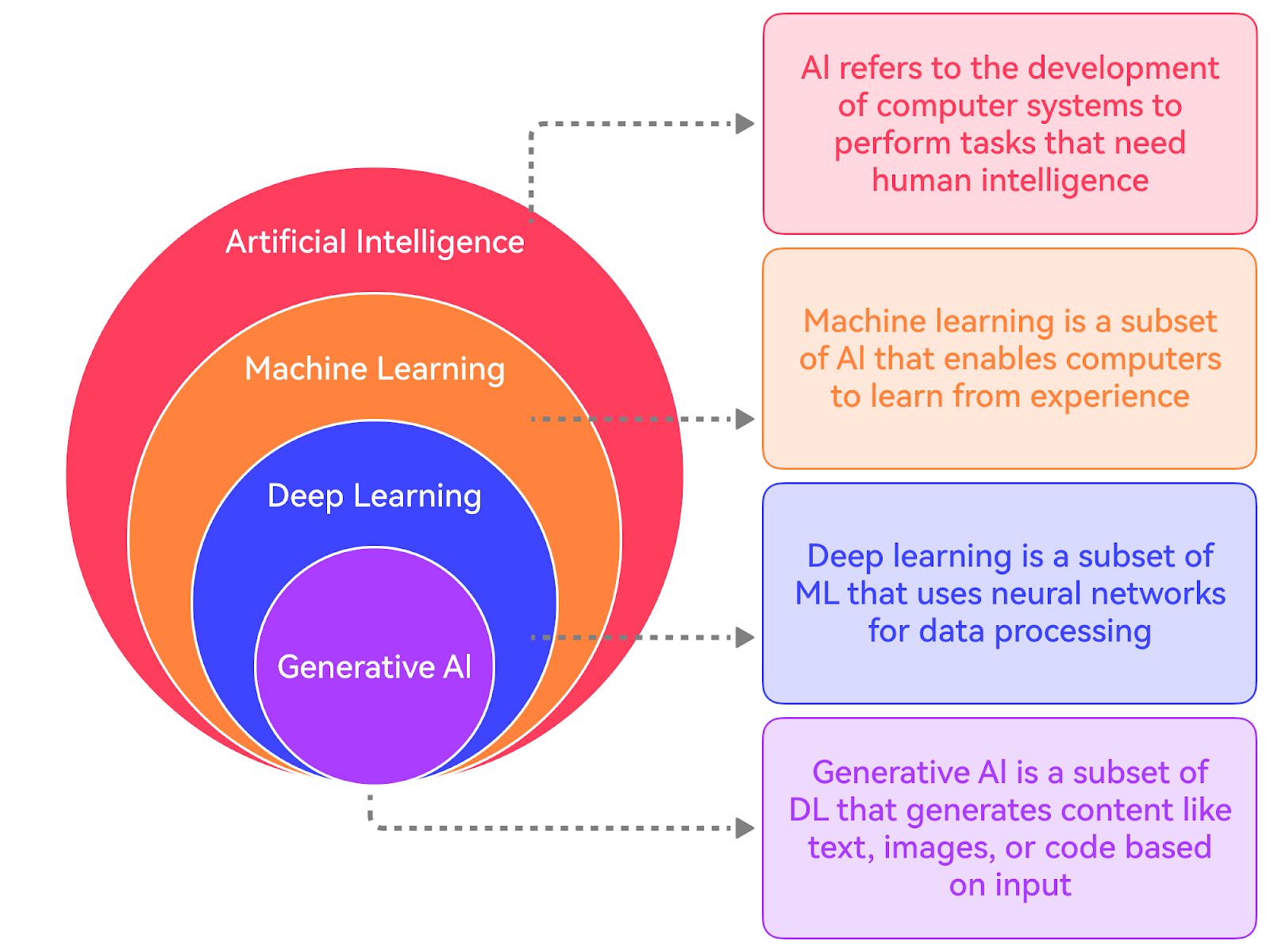

What is AI? (Think Like a Human Brain)

Artificial Intelligence is simply:

Teaching machines to think and act like humans

Real-world examples:

- When Google Maps suggests the fastest route → that's AI

- When Instagram shows reels you like → that's AI

- When ChatGPT answers your questions → that's AI

So AI is not magic. It’s just software trained to behave intelligently.

Machine Learning (How Machines Learn Without Coding Rules)

Traditional programming works like this:

"If this happens → do this"

But Machine Learning is different:

"Here is data → learn patterns yourself"

Real-world example:

Imagine teaching a child:

You show the child 100 photos of cats 🐱. The child learns: “this looks like a cat.”

The next time, the child identifies a new cat on its own.

That’s essentially how ML works.
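To make the contrast concrete, here is a minimal Python sketch of learning from labeled examples instead of hand-writing rules. It uses 1-nearest-neighbor classification, one of the simplest supervised methods: a new example gets the label of the most similar example seen in training. The two features (ear pointiness and whisker length, each scored from 0 to 1) and all the data points are made up for illustration.

```python
import math

# Hypothetical toy dataset: each example is (features, label).
# Features are [ear_pointiness, whisker_length], both on a 0-1 scale.
training_data = [
    ([0.9, 0.8], "cat"),
    ([0.8, 0.9], "cat"),
    ([0.7, 0.7], "cat"),
    ([0.2, 0.1], "dog"),
    ([0.3, 0.2], "dog"),
    ([0.1, 0.3], "dog"),
]


def distance(a, b):
    """Euclidean distance between two feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def predict(features):
    """Label a new example with the label of its closest training example."""
    _, label = min(training_data, key=lambda example: distance(example[0], features))
    return label


# A cat the model has never seen before
print(predict([0.85, 0.75]))  # cat
```

Notice that no line of code says what a cat looks like; adding more labeled examples is how you would improve it.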

Types (simple version):

- Supervised → Teacher is there (labeled data)

- Unsupervised → No teacher (find patterns)

- Reinforcement → Learn by reward/punishment (like games)
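By contrast, unsupervised learning gets no labels at all. The Python sketch below runs a simplified k-means clustering (a common unsupervised algorithm) on made-up, one-dimensional data, say monthly customer spend, and discovers two groups by itself. The data, the choice of two clusters, and the starting centers are assumptions picked to keep the example short and deterministic.

```python
def kmeans_1d(points, centers, iterations=10):
    """Group numbers into clusters without any labels (simplified k-means)."""
    for _ in range(iterations):
        # Assignment step: put each point in the cluster with the nearest center
        clusters = [[] for _ in centers]
        for p in points:
            nearest = min(range(len(centers)), key=lambda i: abs(p - centers[i]))
            clusters[nearest].append(p)
        # Update step: move each center to the mean of its cluster
        centers = [sum(c) / len(c) if c else centers[i] for i, c in enumerate(clusters)]
    return centers, clusters


# Hypothetical unlabeled data, e.g. monthly spend of eight customers
spend = [12, 15, 11, 14, 95, 100, 102, 98]
centers, clusters = kmeans_1d(spend, centers=[0.0, 50.0])
print(clusters)  # [[12, 15, 11, 14], [95, 100, 102, 98]]
```

Nobody told the algorithm which customers are "low" or "high" spenders; it found the two groups from the data alone.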

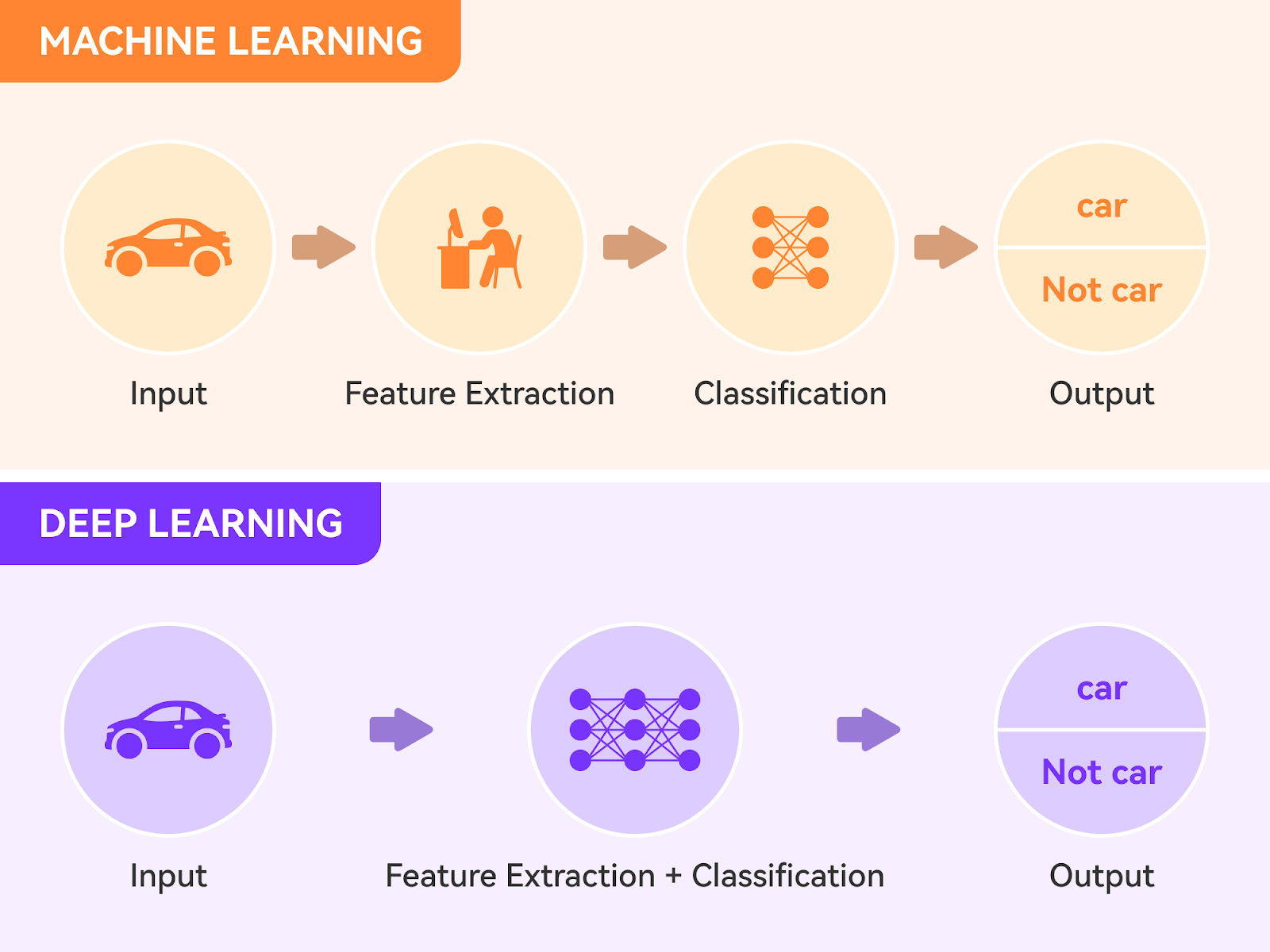

Deep Learning (Brain-like Learning)

Deep Learning is just:

Machine Learning + Neural Networks with many layers (brain-inspired)

Real-world examples:

- Face unlock on your phone

- Voice assistants like Alexa and Siri

- Self-driving car vision

These tasks require recognizing complex patterns, so we use Deep Learning.
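To see what stacking layers buys you, here is a hand-wired two-layer neural network in Python that computes XOR (output 1 when exactly one input is 1). No single straight-line rule over the two inputs can do this, but one hidden layer with a ReLU activation can. The weights are set by hand, following a well-known textbook construction; in real deep learning they are learned from data with backpropagation, and networks have far more layers and neurons.

```python
def relu(x):
    """Rectified linear unit: passes positive values through, zeroes out negatives."""
    return max(0.0, x)


def tiny_network(x1, x2):
    """Two-layer neural network with hand-picked weights that computes XOR."""
    # Hidden layer: two neurons, each a weighted sum plus a bias, then ReLU
    h1 = relu(1 * x1 + 1 * x2 + 0)
    h2 = relu(1 * x1 + 1 * x2 - 1)
    # Output layer: weighted sum of the hidden neurons
    return 1 * h1 - 2 * h2


for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, "->", tiny_network(a, b))
```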

Natural Language Processing (NLP)

NLP is a subfield of AI that focuses on enabling computers to understand, interpret, and generate human language.

It involves tasks such as text classification, sentiment analysis, entity recognition, machine translation, and text generation.
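As a taste of one of these tasks, here is a deliberately simple, lexicon-based sentiment analyzer in Python that just counts positive and negative words. The word lists are hypothetical and tiny. Modern NLP systems, including Transformer models, learn these signals from data instead of relying on hand-written lists, which is why they cope far better with negation ("not bad") and sarcasm.

```python
import re

# Hypothetical, hand-picked word lists (real systems learn such signals from data)
POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "terrible", "hate", "awful", "sad"}


def sentiment(text):
    """Return 'positive', 'negative', or 'neutral' by counting lexicon words."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(sentiment("I love this phone, the camera is great!"))  # positive
print(sentiment("Terrible battery. I hate it."))             # negative
```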

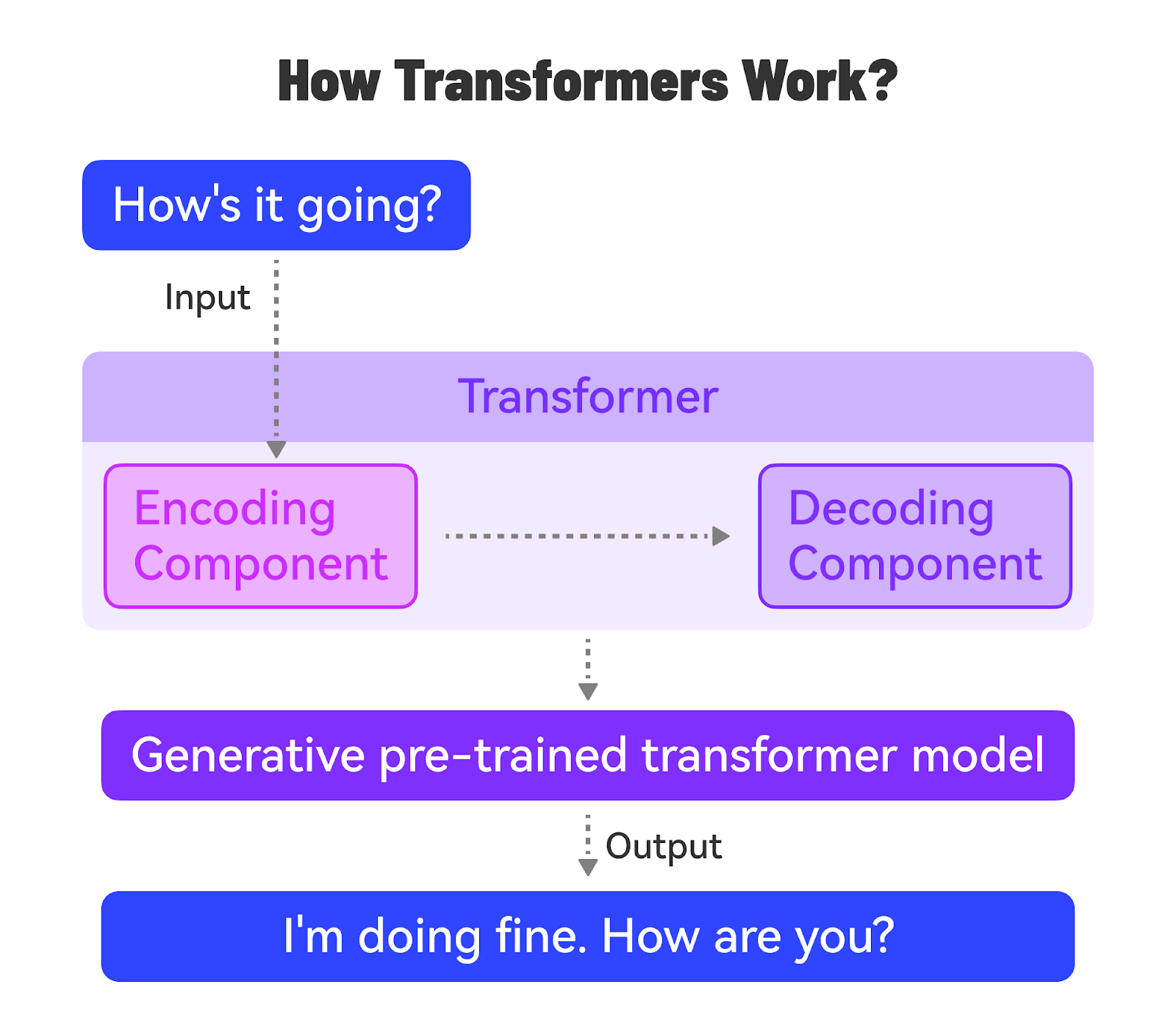

Deep learning models, particularly Transformer models, have revolutionized NLP in recent years.

Transformers (The Real Breakthrough)

This is where things changed.

Transformers are special models that:

Understand context, not just words

Example:

Sentence:

"I went to the bank"

Which bank?

River bank? Money bank?

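Transformers look at the full sentence (and the text around it) to work out the meaning. In “I went to the bank to deposit my salary”, the words “deposit” and “salary” point to the money bank.

That’s a big part of why modern AI feels smart.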
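The mechanism behind this is called self-attention: each word scores how relevant every other word in the sentence is, and its meaning is updated as a weighted mix of those words. Below is a minimal Python sketch of scaled dot-product attention for the word "bank". The 2-number word vectors are hypothetical (first number roughly "nature-ness", second roughly "finance-ness"). Real Transformers learn much larger vectors plus separate query, key, and value projections; here the same vector plays all three roles to keep things short.

```python
import math

# Hypothetical 2-D word vectors: [nature-ness, finance-ness].
# Words not listed get [0, 0], i.e. they carry no signal in this toy.
EMBEDDINGS = {"bank": [1.0, 1.0], "river": [2.0, 0.0], "money": [0.0, 2.0]}


def embed(word):
    return EMBEDDINGS.get(word, [0.0, 0.0])


def attend(query_word, sentence):
    """Contextual vector for query_word via scaled dot-product self-attention."""
    q = embed(query_word)
    vectors = [embed(w) for w in sentence.lower().split()]
    scale = math.sqrt(len(q))
    # 1. Score every word in the sentence against the query word
    scores = [sum(a * b for a, b in zip(q, v)) / scale for v in vectors]
    # 2. Softmax turns the scores into weights that sum to 1
    exps = [math.exp(s) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    # 3. The output is the weighted average of the word vectors
    return [sum(w * v[d] for w, v in zip(weights, vectors)) for d in range(len(q))]


river_bank = attend("bank", "I sat on the river bank")
money_bank = attend("bank", "I deposited money at the bank")
print(river_bank)  # leans toward the first ("nature") dimension
print(money_bank)  # leans toward the second ("finance") dimension
```

The word "bank" starts with the same vector in both sentences; only the surrounding words change where it ends up.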

What is Generative AI (GenAI)?

Now the main thing.

Generative AI = AI that creates new things

It doesn’t just analyze; it generates.

Real-world examples:

- ChatGPT → writes text

- Midjourney → creates images

- AI music tools → generate songs

It learns patterns from data and creates something new but similar.
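The "learn from data, then generate something new but similar" loop can be illustrated with a toy bigram model in Python: it records which word follows which in some training text, then writes new text by repeatedly sampling a plausible next word. This is a big simplification for illustration only. Models like ChatGPT use large neural networks (Transformers) rather than word-pair counts, although they also generate their output one token at a time. The corpus and function names are hypothetical.

```python
import random
from collections import defaultdict

# Hypothetical tiny training corpus
corpus = "the cat sat on the mat . the dog sat on the rug . the cat saw the dog ."


def train_bigrams(text):
    """Learn which words have followed each word in the training text."""
    words = text.split()
    followers = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        followers[current].append(nxt)
    return followers


def generate(followers, start, length, seed=0):
    """Generate text by repeatedly sampling a word that followed the previous one."""
    rng = random.Random(seed)  # fixed seed so the output is reproducible
    output = [start]
    for _ in range(length - 1):
        options = followers.get(output[-1])
        if not options:  # no known continuation: stop early
            break
        output.append(rng.choice(options))
    return " ".join(output)


model = train_bigrams(corpus)
print(generate(model, "the", 8))
```

The output can be a sentence that never appears in the corpus, yet every word pair in it was seen during training: new, but similar.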

Types of GenAI Models (Simple Breakdown)

- Text Models
  - Chatbots
  - Blog writing
  - Code generation
  - Example: ChatGPT

- Image Models
  - Generate images from text
  - Example: “A dog riding a bike” → image created

- Audio Models
  - Text → speech
  - AI music

- Multimodal Models
  - Text + Image + Audio together
  - Example: upload image → ask question → get answer

Prompt Engineering

Prompt engineering is the practice of designing effective prompts to get desired outputs from GenAI models. It involves understanding the model’s capabilities, limitations, and biases.

Effective prompts provide clear instructions, relevant examples, and context to guide the model’s output.

Prompt engineering is a crucial skill for getting the most out of GenAI models.
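Here is a minimal Python sketch of a prompt template that packs these ingredients (clear instructions, relevant examples, and context) into one string. The layout and the `build_prompt` helper are hypothetical; there is no single required format, and each model provider documents its own recommended prompt structure.

```python
def build_prompt(instruction, examples, context, question):
    """Assemble a prompt from instructions, few-shot examples, and context.

    The section labels used here are an illustrative convention, not a standard.
    """
    parts = [f"Instructions: {instruction}", ""]
    # Few-shot examples show the model the exact input/output style we want
    for example_input, example_output in examples:
        parts += [f"Input: {example_input}", f"Output: {example_output}", ""]
    # Context gives the model facts it may not know
    parts += [f"Context: {context}", "", f"Input: {question}", "Output:"]
    return "\n".join(parts)


prompt = build_prompt(
    instruction="Classify the customer message as 'billing', 'technical', or 'other'. Reply with one word.",
    examples=[("I was charged twice this month.", "billing"),
              ("The app crashes when I log in.", "technical")],
    context="Our product is a mobile banking app.",
    question="How do I reset my password?",
)
print(prompt)
```

Ending the prompt with "Output:" nudges the model to answer in the same one-word style as the examples.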

Using the Model APIs

Most GenAI models are accessible through REST APIs, which let developers integrate these models into their own applications.

To get started, you'll need to obtain API access from the desired platform, such as Google’s Vertex AI, OpenAI, Anthropic, or Hugging Face.

Each platform has its own process for granting API access, typically involving:

- Signing up for an account

- Creating an API key

- Completing a verification or approval process

Once you have your API key, you can authenticate your requests to the GenAI model endpoints.

Authentication usually involves providing the API key in the request headers or as a parameter. It's crucial to keep your API key secure and avoid sharing it publicly.
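As a sketch of the authentication step, the Python snippet below reads the key from an environment variable (so it never appears in your source code) and attaches it to a request header. The endpoint URL, the `GENAI_API_KEY` variable name, and the payload fields are placeholders. The header format also varies by provider (OpenAI, for example, expects `Authorization: Bearer <key>`, while Anthropic uses an `x-api-key` header), so check your platform's API reference. The request is only built here, not sent.

```python
import json
import os
import urllib.request

# Placeholder endpoint: replace with your provider's documented URL
API_URL = "https://api.example.com/v1/generate"


def build_request(prompt, max_tokens=200):
    """Build an authenticated POST request; the key comes from the environment."""
    api_key = os.environ.get("GENAI_API_KEY")  # hypothetical variable name
    if not api_key:
        raise RuntimeError("Set the GENAI_API_KEY environment variable first.")
    payload = {"prompt": prompt, "max_tokens": max_tokens}  # hypothetical fields
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # Bearer scheme, as some providers use
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Sending it would be a call to `urllib.request.urlopen(request)`; in practice, most providers also publish official SDKs that handle this for you.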

It’s also important to follow best practices to ensure reliability and efficiency. Here are a few important ones:

- Handle API errors gracefully by checking the response status code.

- Optimize API usage by carefully selecting model parameters, such as the maximum number of tokens. This helps you balance output quality against cost.

- When making API requests, be mindful of the rate limits imposed by the platform. Rate limits determine the maximum number of requests you can make within a specific time frame. Exceeding them may result in API errors or temporary access restrictions.

- Use frameworks and libraries like LangChain to simplify API interactions. These frameworks offer high-level abstractions and utilities for working with GenAI model APIs.
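Checking status codes and respecting rate limits combine naturally: when the platform signals a rate limit (HTTP 429) or a temporary server error (5xx), wait and retry with exponentially growing delays. Below is a minimal Python sketch of that pattern. The `send` callable is a hypothetical stand-in for whatever function actually performs your HTTP request and returns a status code and body. Many official SDKs include built-in retry settings, so check those before rolling your own.

```python
import time

RETRYABLE = {429, 500, 502, 503, 504}  # rate limited or temporary server errors


def call_with_retries(send, max_retries=3, base_delay=1.0, sleep=time.sleep):
    """Call send() and retry retryable failures with exponential backoff.

    send is a hypothetical callable returning (status_code, body).
    """
    for attempt in range(max_retries + 1):
        status, body = send()
        if 200 <= status < 300:
            return body
        if status not in RETRYABLE or attempt == max_retries:
            # Non-retryable error (e.g. 401 bad key) or out of retries: fail loudly
            raise RuntimeError(f"API request failed with status {status}: {body}")
        sleep(base_delay * (2 ** attempt))  # waits 1s, 2s, 4s, ... by default


# Usage with a fake sender: rate-limited twice, then succeeds
responses = iter([(429, "slow down"), (429, "slow down"), (200, "Hello!")])
waits = []
result = call_with_retries(lambda: next(responses), sleep=waits.append)
print(result, waits)  # Hello! [1.0, 2.0]
```

Passing `sleep` in as a parameter is a design choice that lets you test the retry logic instantly, as the usage example does, without real waiting.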

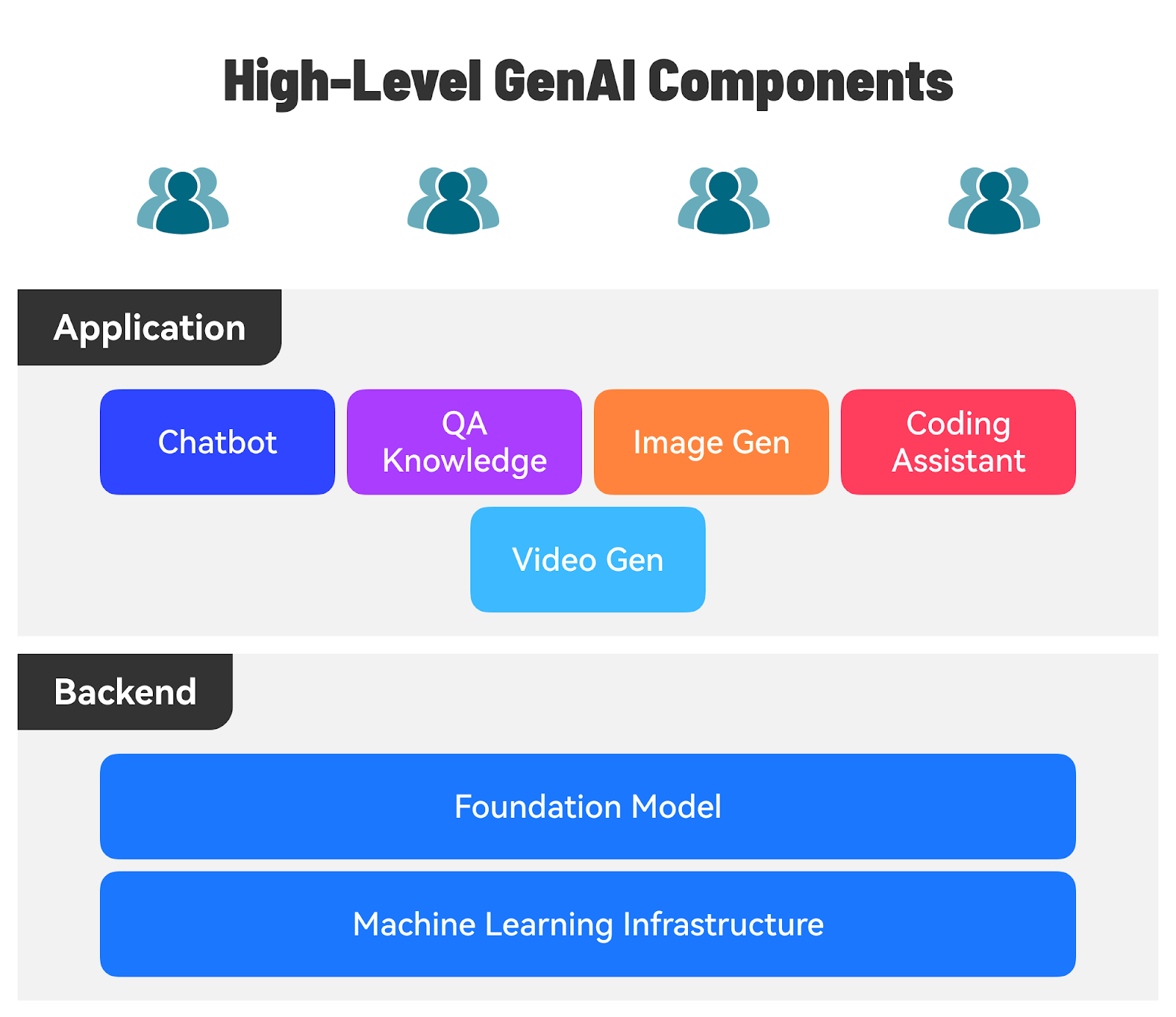

Building Applications Using AI Models

There are many use cases for GenAI-powered applications across various domains:

- Content Creation and Marketing: GenAI applications can help create article outlines, ad copy, and product descriptions.

- Customer Support: AI-powered chatbots can understand user queries and provide accurate, context-aware responses.

- Business and Finance: GenAI applications can help generate financial reports, summaries, or analyses based on company data.

- Education and Learning: GenAI applications can generate customized learning material and explanations based on a student’s learning style.

Making Models Your Own

There is significant interest in making models more adaptable and customizable to suit the needs of a specific domain.

Let’s look at the main techniques to achieve this goal.

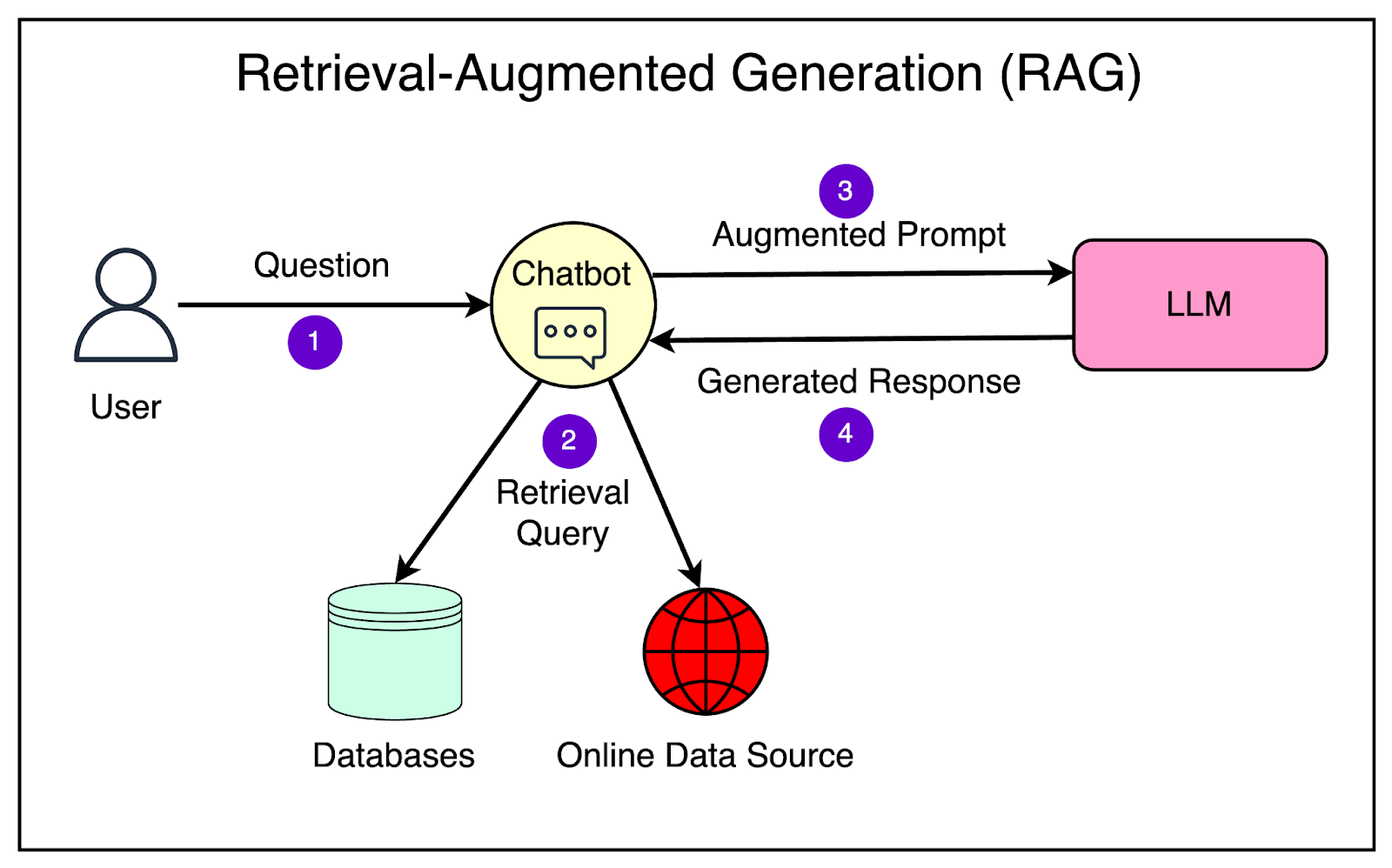

Retrieval-Augmented Generation (RAG)

RAG is a technique that helps improve the accuracy and relevance of the generated responses based on your use case.

It gives your large language model (LLM) access to external information sources, such as your databases, documents, and even the Internet, at query time. This way, the LLM can use up-to-date, relevant information to answer queries specific to your business.

Here’s a high-level overview of how a RAG system works:

- The user poses a question to the RAG system.

- The retrieval component searches the knowledge corpus using the question as a query and retrieves the most relevant passages or documents.

- In the augmentation step, the retrieved passages are combined with the question and fed as input to the LLM. This step is crucial because it supplements the model’s knowledge with relevant context from external sources.

- The LLM processes the input and generates an answer by combining the information from the retrieved passages with its built-in knowledge.

- The generated answer is returned to the user.
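The steps above can be sketched end to end in plain Python. To stay self-contained, this toy version retrieves by word overlap (bag-of-words cosine similarity) instead of the embedding models and vector databases that production RAG systems usually use, and it stops after building the augmented prompt; the generation step would be a call to whichever LLM API you use. The documents and function names are hypothetical.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge corpus (in practice: your docs, databases, wiki pages)
DOCUMENTS = [
    "Refunds are processed within 5 business days of approval.",
    "Our support team is available Monday to Friday, 9am to 6pm.",
    "Premium plans include priority support and a dedicated manager.",
]


def vectorize(text):
    """Bag-of-words vector: word -> count."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question, k=1):
    """Retrieval: find the k passages most similar to the question."""
    q = vectorize(question)
    return sorted(DOCUMENTS, key=lambda d: cosine(q, vectorize(d)), reverse=True)[:k]


def augment(question, passages):
    """Augmentation: combine the retrieved passages and the question into one prompt."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


question = "When are refunds processed?"
prompt = augment(question, retrieve(question))
print(prompt)
# Generation: send `prompt` to your LLM and return its answer to the user.
```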

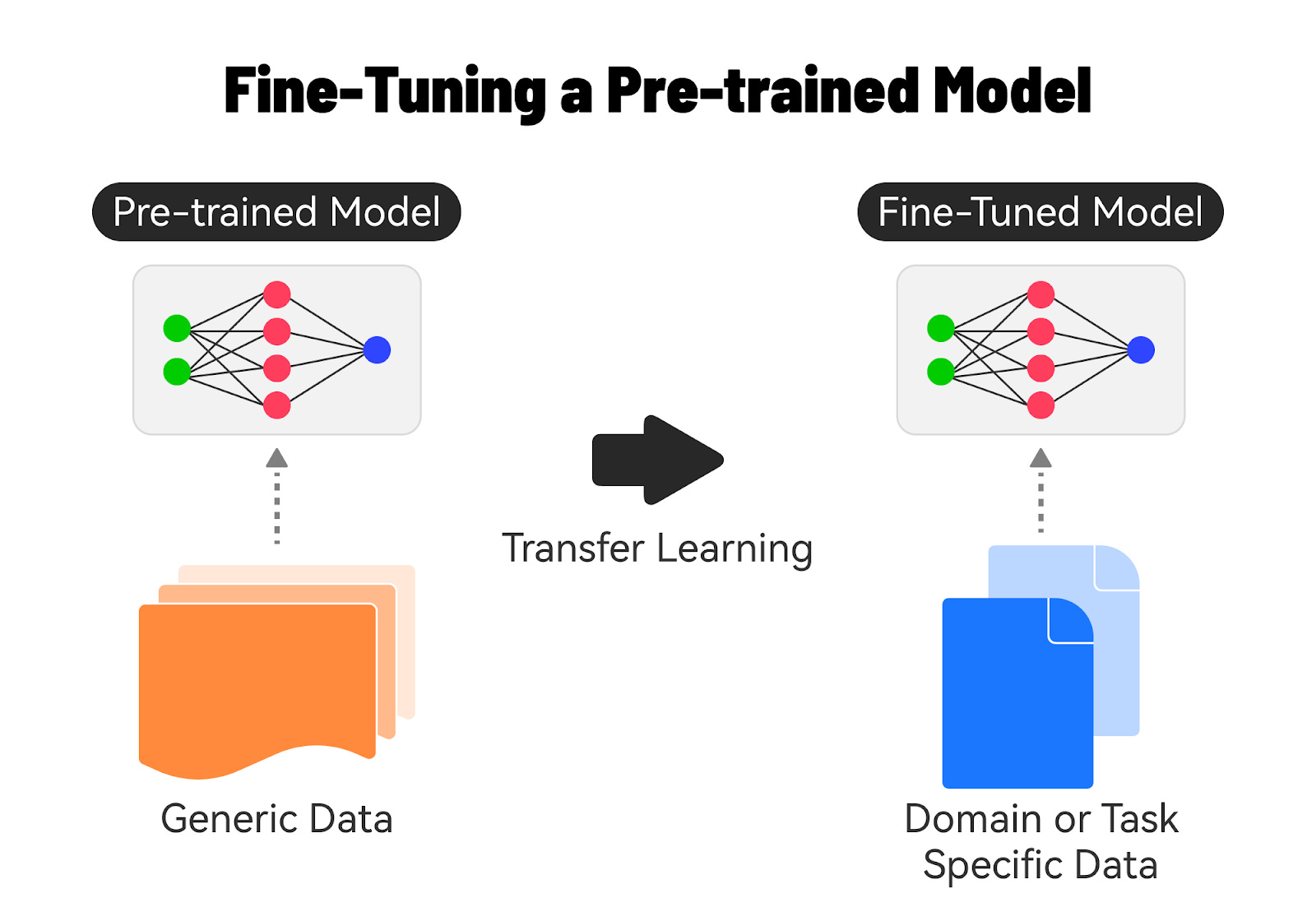

Fine-Tuning AI Models

Fine-tuning a base model on domain-specific data is a powerful technique to improve the performance and accuracy of AI models for specific tasks or industries.

Let’s understand how it’s done.

1 - Understanding Base Models

Base models, also known as pre-trained models, are AI models that have been trained on large, general-purpose datasets.

These models have learned general knowledge and patterns from the training data, making them versatile and applicable to a wide range of tasks.

Examples of base models include Google’s BERT and OpenAI’s GPT models, which have been trained on massive amounts of text data.

2 - The Need for Fine-Tuning

While base models are powerful, they may not always perform optimally for specific domains or tasks.

Common reasons for fine-tuning a foundation (base) model include:

- Teaching the model a specific task, such as code generation or content generation.

- Generating responses that reflect your company’s proprietary dataset.

- Adapting to vocabularies, writing styles, or data distributions that are unique to your use case.

- Reducing hallucinations (outputs that are factually incorrect or unreasonable) in your domain.

Fine-tuning allows us to adapt the base model to better understand and generate content specific to a particular domain.

3 - Fine-Tuning Process

The fine-tuning process typically consists of these steps:

- Data Preparation: Collect a dataset that is representative of the target domain or task, and make sure it is of sufficient size and quality. Preprocess the data to match the input requirements of the base model.

- Model Initialization: Start with the pre-trained base model that is most suitable for the target task, and load its pre-trained weights.

- Training: Feed the domain-specific dataset into the base model (optionally modified, for example with a new task-specific output layer) and continue training it. This is a form of transfer learning: the model’s parameters are fine-tuned by backpropagating the errors and updating the weights based on the domain-specific data.

- Evaluation and Iteration: Evaluate the fine-tuned model’s performance on a validation set drawn from the domain-specific data. Based on the metrics, iterate on the fine-tuning process.
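Real LLM fine-tuning is done with deep learning frameworks on GPUs, but the core idea (start from pre-trained weights, then keep training on a small domain dataset) fits in a few lines of Python. In this toy sketch, the "base model" is a one-feature linear model y = w*x + b whose hypothetical pre-trained weights fit general data (y = 2x), while the domain data follows a slightly different pattern (y = 2x + 1). Plain gradient descent on the domain data then adapts the existing weights instead of training from scratch.

```python
def mse(params, data):
    """Mean squared error of the linear model y = w*x + b on the data."""
    w, b = params
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def fine_tune(params, data, learning_rate=0.05, epochs=200):
    """Continue training pre-trained params on domain data with gradient descent."""
    w, b = params
    n = len(data)
    for _ in range(epochs):
        # Gradients of the MSE loss with respect to w and b
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        # Update step: move each weight against its gradient
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Model initialization: hypothetical weights "pre-trained" on general data
pretrained = (2.0, 0.0)
# Data preparation: a small, hypothetical domain-specific dataset
domain_data = [(0, 1), (1, 3), (2, 5), (3, 7)]
# Training, then evaluation
tuned = fine_tune(pretrained, domain_data)
print(f"loss before: {mse(pretrained, domain_data):.3f}, after: {mse(tuned, domain_data):.5f}")
```

For brevity, this evaluates on the training data itself; a real workflow would measure the loss on a held-out validation set, as the evaluation step describes.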

4 - Benefits of Fine-Tuning

There are significant benefits to fine-tuning:

- It allows the model to capture the nuances and characteristics of the target domain, leading to better accuracy and performance on domain-specific tasks.

- Because it starts from a pre-trained base model, fine-tuning requires less training data and computational resources than training a model from scratch.

- It enables the model to leverage the knowledge learned from general-purpose training data and adapt it to the specific domain.

Conclusion

Getting started with Generative AI is an exciting journey that opens up a world of possibilities for developers and businesses alike.

By understanding the key concepts, exploring the available models and APIs, and following best practices, you can harness GenAI's power to build innovative applications and solve complex problems.

Whether you're interested in natural language processing, image generation, or audio synthesis, there are numerous GenAI models and platforms to choose from. By leveraging pre-trained models and fine-tuning them on domain-specific data, you can create more accurate and efficient AI solutions tailored to your specific needs.